Posted on: May 23, 2005

A crucial step in a procedure that could enable future quantum computers to break today’s most commonly used encryption codes has been demonstrated by physicists at the National Institute of Standards and Technology (NIST).

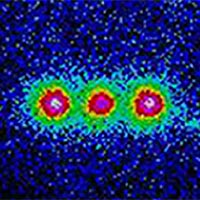

As reported in the May 13 issue of the journal Science, the NIST team showed that it is possible to identify repeating patterns in quantum information stored in ions (charged atoms). The NIST work used three ions as quantum bits (qubits) to represent 1s or 0s or, under the unusual rules of quantum physics, both 1 and 0 at the same time. Scientists believe that much larger arrays of such ions could process data in a powerful quantum computer. Previous demonstrations of similar processes were performed with qubits made of molecules in a liquid, a system that cannot be expanded to large numbers of qubits.

“Our demonstration is important, because it helps pave the way toward building a large-scale quantum computer,” says John Chiaverini, lead author of the paper. “Our approach also requires fewer steps and is more efficient than those demonstrated previously.”

The NIST team used electromagnetically trapped beryllium ions as qubits to demonstrate a quantum version of the “Fourier transform” process, a widely used method for finding repeating patterns in data. The quantum version is the crucial final step in Shor’s algorithm, a series of steps for finding the “prime factors” of large numbers—the prime numbers that when multiplied together produce a given number.
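To make "finding repeating patterns" concrete, here is a minimal classical sketch in Python. It is not the NIST procedure, which acts on trapped ions. It only applies the same mathematical map, a normalized discrete Fourier transform, to the 8 amplitudes that three qubits can hold. The input pattern, the function names, and the tolerance are illustrative assumptions.

```python
import cmath


def fourier_transform(amplitudes):
    """Unitary discrete Fourier transform of a list of complex amplitudes.

    This is the linear map a quantum Fourier transform applies to a
    register's amplitudes; here it is simply computed classically.
    """
    n = len(amplitudes)
    scale = n ** -0.5
    return [
        scale * sum(a * cmath.exp(2j * cmath.pi * j * k / n)
                    for j, a in enumerate(amplitudes))
        for k in range(n)
    ]


def peak_indices(amplitudes, tol=1e-9):
    """Indices whose probability |amplitude|^2 is non-negligible."""
    return [k for k, a in enumerate(amplitudes) if abs(a) ** 2 > tol]


# Three qubits span 2**3 = 8 basis states.
# Assumed example input: equal weight on every 2nd state (period 2).
period = 2
state = [1.0 if j % period == 0 else 0.0 for j in range(8)]
norm = sum(abs(a) ** 2 for a in state) ** 0.5
state = [a / norm for a in state]

transformed = fourier_transform(state)
peaks = peak_indices(transformed)
total_probability = sum(abs(a) ** 2 for a in transformed)
```

In this sketch the transformed amplitudes are nonzero only at indices that are multiples of 8 / period, so the spacing of the peaks reveals how often the input pattern repeats.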

Developed by Peter Shor of Bell Labs in 1994, the factoring algorithm sparked burgeoning interest in quantum computing. Modern cryptography techniques, which rely on the fact that even the fastest supercomputers require very long times to factor large numbers, are used to encode everything from military communications to bank transactions. But a quantum computer using Shor’s algorithm could factor a number several hundred digits long in a reasonably short time. This algorithm made code breaking the most important application for quantum computing.
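The link between repeating patterns and factoring can be sketched classically. The Python below is an illustration under stated assumptions, not Shor's algorithm itself: it finds the repetition period of the sequence a, a², a³, ... modulo N by brute force, which is the step a quantum computer would accelerate, and then derives factors with a greatest common divisor. The values N = 15 and a = 7 and all function names are chosen only for illustration.

```python
from math import gcd


def find_period(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    A quantum computer would obtain r using the quantum Fourier
    transform; this loop is only a slow classical stand-in.
    """
    if gcd(a, n) != 1:
        raise ValueError("a and n must share no common factor")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factors_from_period(a, n):
    """Try to split n using the period of a modulo n.

    Returns a sorted pair of factors, or None when this choice of a
    does not work (odd period, or a**(r/2) equal to -1 modulo n).
    """
    r = find_period(a, n)
    if r % 2 == 1:
        return None
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None
    return tuple(sorted((gcd(half - 1, n), gcd(half + 1, n))))


# Assumed toy example: factor 15 using the base 7.
period = find_period(7, 15)
factors = factors_from_period(7, 15)
```

For numbers several hundred digits long, the brute-force loop in `find_period` becomes impractical, which is why an efficient quantum way to find the period matters.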
